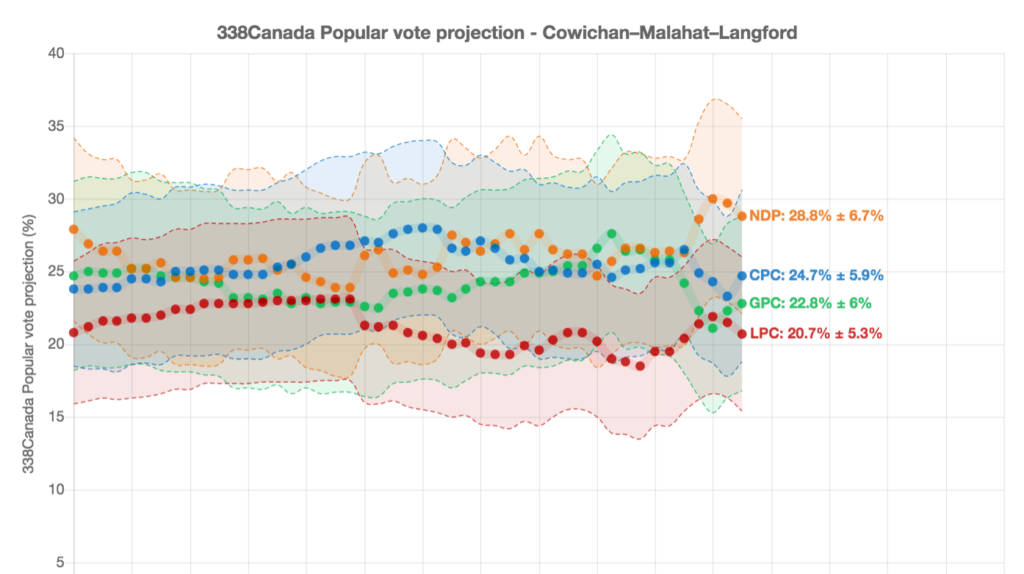

The Discourse Cowichan’s David Minkow recently dug into the federal election’s tight, four-way race in the Cowichan-Malahat-Langford riding. He relied on election predictions from Philippe Fournier of 338Canada to tell that story. But how much can we trust those predictions? 338Canada says it correctly identified winners at least 90 percent of the time in three recent provincial battles. But polls famously failed to predict the election of U.S. President Donald Trump in 2016.

Can we trust the polls? David asked B.C.-based pollster Angus McAllister, founder of McAllister Opinion Research. He has done political polling in the United States, Canada and overseas. Here’s an edited version of their online conversation.

How much of a grain of salt should the public take when seeing polling figures from, say, 338Canada about their local riding?

Generally, I’d say that 338Canada is “good enough” to be a useful tool for answering the question of “which parties are/are not in play?” in most ridings. The team behind the site is independent and has demonstrated competence over the years at making data-based inferences about local-regional effects from aggregated national surveys. That being said, the numbers are only “estimates” and they are trying to predict the behaviour of one of the most fickle, irrational species on the planet: human beings.

So these “estimates” are not going to get the numbers right in all ridings, and they are going to be less reliable in swing ridings experiencing rapid demographic change, like those on Vancouver Island. And … it stands to reason that inferences about a local race from two or three professionally designed local surveys are going to be more reliable than inferences made from national polls.

What about the national predictions? Can we trust those polls?

If you look at 338’s national numbers as being about probability, they are going to be fairly reliable. They suggest that (1) there is unlikely to be a landslide by the Conservatives or Liberals; (2) despite the blackface, Trudeau is still in play; and (3) Ontario, Quebec and the Maritimes are Liberal strongholds and B.C. is contested territory. And they offer nothing on the actual issues and motives driving the electoral behaviour of Canadians.

The parties’ own polling tends to be more accurate than public polls, right?

Yes. At least until the final few days before the ballot. Public polls, aka the election polls you see reported in the news media, tend to be very low budget compared to internal polls. That is because in the weeks and months before the ballot, news organizations have nothing to lose by getting polls wrong. For them, polling is infotainment. It draws eyeballs and helps them sell.

Political campaigns, on the other hand, view internal party polls as critical intel. They need polling to help inform where to put scarce resources, to identify the voters they’re reaching/not reaching, to assess what is working/not working, and to know where they’re at compared to their rivals. Polling is an investment in survival. They can’t afford to cut corners.

I know that a while back, people with cell phones were underrepresented in polling. Is that still an issue?

Mobile interviews are a key component of phone surveys. However, I do not think the cellphone issue is as much of an issue as it once was. This is because most surveys nowadays are conducted online.

If you’re looking at a phone survey, you should ask if cell phones were called. But any competent professional pollster will have that figured out. So it’s not really an issue.

The bigger sampling issues generally derive from doing a poll over too short a time and undersampling certain demographics. For example, if you do a poll on a Friday and Saturday night, then you’re likely to oversample older adults and undersample young adults. And in many parts of Canada, that means oversampling Conservatives. Or if your poll is too long or poorly designed, you run the risk of oversampling university graduates, who — the data suggests — inexplicably have more patience for longer, more wordy surveys. And, in such cases, you are likely oversampling Greens and Liberals. Unless you’re in Vancouver, in which case, it may not matter.

What do you think is the public’s biggest misconception when it comes to polling?

The public’s biggest misconception about polling is that opinion research firms like to make up or manipulate numbers to keep their clients happy. Anyone who thinks that does not know the business. Opinion research is a form of data-based modelling, and it is very expensive. Organizations hire us to provide accurate and reliable tracking of what is really going on in the public mind and the market. They need to know what the public thinks and feels, and they need to know what’s driving it, and where it’s going. That’s because if you’re an organization spending millions of dollars on products, services, or programs for humans, you need data on humans to inform your decisions. If researchers just made up the numbers, clients wouldn’t pay them. And, trust me, if you get it wrong, they figure it out pretty fast. To stay in business, our clients have a very strong incentive to get it right. And as such, so do we.

How much has election polling improved since you started out as a pollster? What are the primary improvements?

Well, if you’re interested in the data, as opposed to newspaper headlines, post-election reviews of all public polls suggest that the accuracy of polling has increased over the past few decades. This seems counterintuitive because of declining participation rates and the increasing difficulty of achieving a truly probabilistic sample. But improvements in modelling and experience over time seem to have helped counter the deficiencies. And the real value of polling is less the horse-race numbers and more the insights into motivation, the stuff that lies beneath the surface. And for that, I would say that increasing the diversity of the people behind the polls, the people developing the insights and questions, has no doubt really helped. Because the heart of good opinion research is the questions, not the answers.

I read the AAPOR report on why polls failed to predict U.S. President Donald Trump’s election in 2016. One of the things that stood out was the challenge of capturing the intentions of voters who don’t decide until the last minute. Has that become more of a challenge lately? And is there anything a pollster can do about it?

The key to getting it right is correctly identifying those voters who are likely to change their minds, and then following up close to the ballot day. But really, I’m not sure of the value of predicting an election outcome 24 hours before an actual election. Why would you do it? If you’re running a campaign, you’ve run out of time. And if you’re a news organization looking for last-minute infotainment, I’m not sure how this contributes anything to society.

Any other main takeaways from what happened in 2016 that the public and pollsters should be mindful of?

Pollsters tend to overlook less-literate, less-educated voters. They are harder to reach and have less patience with interviews that are conducted online or on the phone (i.e., not face to face). So those voters are often your wild card. If they show up.
